IEB HPC Cluster Overview

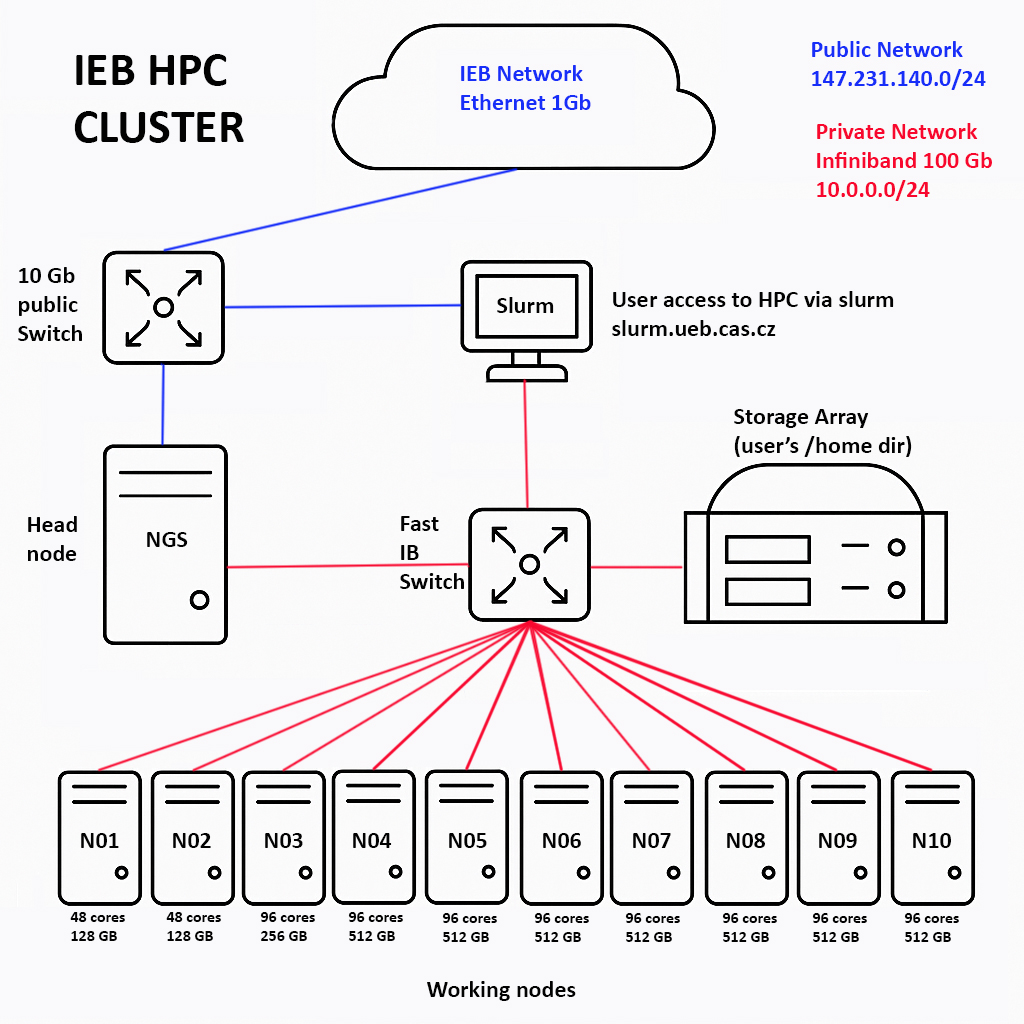

This page summarizes the physical and logical layout of the IEB HPC cluster, as shown in the diagram above, and lists its key resources and interconnects.

Topology at a glance

- Head node: `NGS`, connected to the public network via a 10 Gb Ethernet switch and to the private fabric via 56 Gb InfiniBand.

- Login/Slurm node: users access the system through Slurm at `slurm.ueb.cas.cz` (blue line to public LAN, red line to IB fabric).

- Private fabric: a 56 Gb InfiniBand switch interconnects the login node, head node, storage, and all compute nodes.

- Public LAN: the IEB network is 1 Gb Ethernet only; external/public subnet 147.231.140.0/24.

- Private cluster subnet: 10.0.0.0/24 carried over InfiniBand.

- Storage array: provides users' `/home` directories; attached to the IB switch for high throughput.

- Compute nodes: ten nodes (N01–N10) attached to the IB switch.

Aggregate capacity: 864 CPU cores, ~4 TB RAM across N01–N10.
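The two subnets listed above can be told apart programmatically, which is handy when checking which interface a service is bound to. The following is a minimal sketch using Python's standard `ipaddress` module; the function name `classify` and the example host addresses are made up for illustration and do not correspond to real machines on the cluster.

```python
import ipaddress

# Subnets as listed on this page.
PUBLIC_NET = ipaddress.ip_network("147.231.140.0/24")
PRIVATE_NET = ipaddress.ip_network("10.0.0.0/24")


def classify(address: str) -> str:
    """Return which cluster network an IPv4 address belongs to."""
    ip = ipaddress.ip_address(address)
    if ip in PUBLIC_NET:
        return "public (Ethernet)"
    if ip in PRIVATE_NET:
        return "private (InfiniBand)"
    return "outside the cluster subnets"


# The host parts below are hypothetical, chosen only to exercise each branch.
for example in ("147.231.140.25", "10.0.0.11", "192.168.1.5"):
    print(example, "->", classify(example))
```

Both subnets are /24, so each spans 256 addresses.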

Networks & switching

- Public network path (blue): users reach the login/Slurm node via the institute's 1 Gb Ethernet; the head node has a 10 Gb switch uplink. Because the IEB network still runs at 1 Gb Ethernet, end-to-end throughput on the public path is typically limited by that segment.

- Private high‑speed fabric (red): a 56 Gb InfiniBand switch provides low‑latency, high‑bandwidth connectivity among the login node, head node, storage, and all compute nodes.

- Addressing: public 147.231.140.0/24; private 10.0.0.0/24.
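To get a feel for what these link speeds mean in practice, the sketch below estimates ideal transfer times for a dataset over each link and picks the bottleneck on the public path. It assumes the figures on this page are nominal rates in Gb/s and ignores protocol overhead, so real transfers will be slower; the 100 GB dataset size and the label names are hypothetical.

```python
def transfer_seconds(size_gb: float, link_gbit_per_s: float) -> float:
    """Ideal time to move size_gb gigabytes over a link, ignoring overhead."""
    size_gbit = size_gb * 8  # 1 byte = 8 bits
    return size_gbit / link_gbit_per_s


# Nominal link rates from this page, assumed to be in Gb/s.
LINKS = {"institute LAN": 1, "head-node uplink": 10, "InfiniBand": 56}

dataset_gb = 100  # hypothetical dataset size
for name, rate in LINKS.items():
    print(f"{name:>16}: {transfer_seconds(dataset_gb, rate):7.1f} s")

# A path is only as fast as its slowest segment.
public_path = ["institute LAN", "head-node uplink"]
bottleneck = min(LINKS[name] for name in public_path)
print("public path bottleneck (Gb/s):", bottleneck)
```

Under these assumptions, 100 GB needs at least 800 s over the 1 Gb segment, compared with 80 s at 10 Gb and roughly 14 s at 56 Gb.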

Access

- Submit and manage jobs via Slurm on `slurm.ueb.cas.cz`.

- See also: Partitions & time limits and Slurm examples.
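As a rough illustration of job submission, the sketch below renders a minimal batch script and hands it to `sbatch` when that command is available. It is not the cluster's official template: the job name, task count, time limit, and `srun hostname` payload are placeholder values, the helper `make_batch_script` is hypothetical, and no partition is set because partitions are documented on a separate page.

```python
import shutil
import subprocess
import tempfile


def make_batch_script(job_name: str, ntasks: int, time_limit: str, command: str) -> str:
    """Render a minimal Slurm batch script as text."""
    return "\n".join([
        "#!/bin/bash",
        f"#SBATCH --job-name={job_name}",
        f"#SBATCH --ntasks={ntasks}",
        f"#SBATCH --time={time_limit}",
        "#SBATCH --output=%x-%j.out",  # %x = job name, %j = job ID
        "",
        command,
        "",
    ])


# Placeholder values; adjust to the documented partitions and limits.
script = make_batch_script("hello", 1, "00:05:00", "srun hostname")
print(script)

# Submit only where sbatch is on PATH (for example, on the login node).
if shutil.which("sbatch"):
    with tempfile.NamedTemporaryFile("w", suffix=".sh", delete=False) as fh:
        fh.write(script)
    result = subprocess.run(
        ["sbatch", "--parsable", fh.name],
        capture_output=True, text=True, check=True,
    )
    print("submitted job", result.stdout.strip())
```

Run anywhere else, the script is only printed, which makes it easy to inspect before submitting.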

Storage

- Users' `/home` directories reside on the storage array attached to the IB fabric for fast I/O to all nodes. Per-user quotas are enforced.
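Since quotas apply, it can help to know roughly how much space a home directory occupies. This page does not state the quota size or which tool reports it, so the sketch below simply sums file sizes under a directory and compares the total with an assumed limit; `QUOTA_BYTES` is a hypothetical value and `directory_usage_bytes` is an illustrative helper, not a cluster utility.

```python
import os
from pathlib import Path

# Hypothetical 100 GiB limit; the real quota is not stated on this page.
QUOTA_BYTES = 100 * 1024**3


def directory_usage_bytes(root: Path) -> int:
    """Sum apparent file sizes under root; symlinks are counted, not followed."""
    total = 0
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            try:
                st = os.lstat(os.path.join(dirpath, name))
            except OSError:
                continue  # file vanished or is unreadable
            total += st.st_size
    return total


if __name__ == "__main__":
    used = directory_usage_bytes(Path.home())
    share = 100 * used / QUOTA_BYTES
    print(f"{used / 1024**3:.2f} GiB used, {share:.1f}% of the assumed quota")
```

Apparent sizes can differ from what the storage system actually charges against a quota, so treat the result as an estimate.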

Note: This page describes the hardware and network layout. Operational policies, partitions, and job templates are documented on the linked pages above.